Before you start reading this article, let me confirm that I am not a guru in database design. The 11 points listed below are things I have learned from projects, my own experience and my own reading. I personally think they have helped me a lot when it comes to DB design. Any criticism is welcome.

The reason I am writing a full-blown article is that when developers sit down to design a database, they tend to follow the three normal forms like a silver bullet. They tend to think normalization is the only way of designing. Due to this mindset they sometimes hit roadblocks as the project moves ahead.

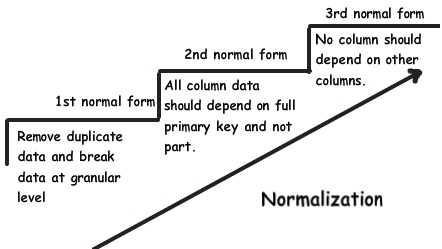

If you are new to normalization, see "3 normal forms in action", which explains all three normal forms step by step.

All said and done, normalization rules are important guidelines, but treating them as set in stone is asking for trouble. Below are my own 11 rules, which I keep at the top of my head while doing DB design.

Rule 1:- What is the nature of the application (OLTP or OLAP)?

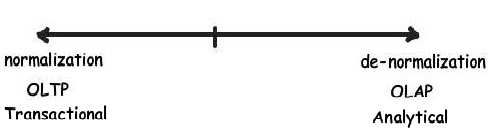

When you start your database design, the first thing to analyze is the nature of the application you are designing for: is it transactional or analytical? You will find many developers applying normalization rules by default without thinking about the nature of the application, and later running into performance and customization issues. As said, there are two kinds of applications, transaction-based and analytical; let's understand what these types are.

Transactional: - In this kind of application your end user is more interested in CRUD, i.e. creating, reading, updating and deleting records. The official name for this kind of database is OLTP (online transaction processing).

Analytical: - In this kind of application your end user is more interested in analysis, reporting, forecasting, etc. These databases have fewer inserts and updates. The main intention here is to fetch and analyze data as fast as possible. The official name for this kind of database is OLAP (online analytical processing).

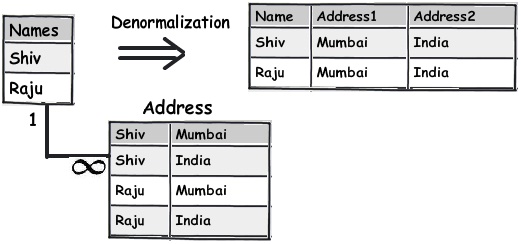

So in other words, if you think inserts, updates and deletes are more prominent, go for a normalized table design; otherwise create a flat, denormalized database structure.

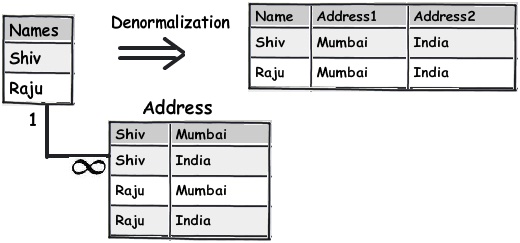

The simple diagram here shows how the names and addresses on the left-hand side form a simple normalized table, and how applying a denormalized structure turns them into a flat table.
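To make the contrast concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The table and column names (`person`, `address`, `person_flat`) and the sample rows are my own assumptions for illustration and are not taken from the diagram.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Normalized (OLTP style): each address is stored once and referenced by key.
    CREATE TABLE address (
        address_id INTEGER PRIMARY KEY,
        city       TEXT NOT NULL,
        country    TEXT NOT NULL
    );
    CREATE TABLE person (
        person_id  INTEGER PRIMARY KEY,
        name       TEXT NOT NULL,
        address_id INTEGER NOT NULL REFERENCES address(address_id)
    );
    INSERT INTO address VALUES (1, 'Mumbai', 'India');
    INSERT INTO person  VALUES (1, 'Shiv', 1), (2, 'Raju', 1);

    -- Denormalized (OLAP style): one flat table built from the join,
    -- so reporting queries need no joins at read time.
    CREATE TABLE person_flat AS
        SELECT p.name, a.city, a.country
        FROM person AS p JOIN address AS a ON a.address_id = p.address_id;
""")

flat_rows = conn.execute(
    "SELECT name, city, country FROM person_flat ORDER BY name"
).fetchall()
print(flat_rows)
```

Notice that the flat table repeats the city and country for every person. That is the price paid for join-free reads.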

Rule 2:- Break your data into logical pieces, make life simpler

This rule is actually the first rule of first normal form. One sign that it is being violated: if your queries use too many string-parsing functions such as substring, charindex, etc., this rule probably needs to be applied.

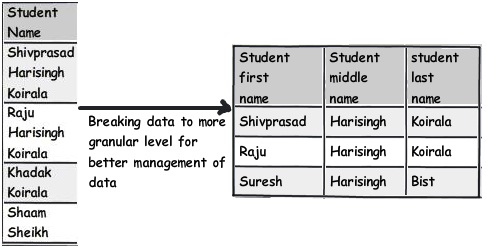

For instance, look at the table below, which has student names. If you ever want to query for students whose name has "Koirala" but not "Harisingh", you can imagine what kind of query you will end up with.

So the better approach would be to break this field into further logical pieces so that we can write clean and optimal queries.
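As a rough sketch of the difference, the snippet below asks the same question of a single-column name and of a split name. The table names (`student_raw`, `student`), the column split into first, middle and last name, and the sample rows are assumptions made for this example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Before: the whole name lives in one column.
    CREATE TABLE student_raw (roll_no INTEGER PRIMARY KEY, full_name TEXT);
    INSERT INTO student_raw VALUES
        (1, 'Shiv Harisingh Koirala'),
        (2, 'Raju Koirala'),
        (3, 'Harisingh Shaam');

    -- After: the name is broken into logical pieces.
    CREATE TABLE student (
        roll_no     INTEGER PRIMARY KEY,
        first_name  TEXT,
        middle_name TEXT,
        last_name   TEXT
    );
    INSERT INTO student VALUES
        (1, 'Shiv', 'Harisingh', 'Koirala'),
        (2, 'Raju', NULL, 'Koirala'),
        (3, 'Harisingh', NULL, 'Shaam');
""")

# Pattern matching on the combined column: works, but it is fragile
# and cannot tell a last name from a middle name.
raw_hits = conn.execute(
    "SELECT roll_no FROM student_raw "
    "WHERE full_name LIKE '%Koirala%' AND full_name NOT LIKE '%Harisingh%'"
).fetchall()

# The same question against the split columns is a plain comparison.
clean_hits = conn.execute(
    "SELECT roll_no FROM student "
    "WHERE last_name = 'Koirala' "
    "AND (middle_name IS NULL OR middle_name <> 'Harisingh')"
).fetchall()

print(raw_hits, clean_hits)
```

Both queries return the same student here, but only the second one states exactly which part of the name is being tested.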

Rule 3:- Do not get overdosed with rule 2

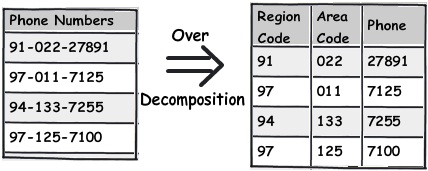

Developers are cute creatures. If you tell them this is the way, they keep doing it; in fact they overdo it, leading to unwanted consequences. This also applies to rule 2, which we just talked about. When you think about decomposing, pause and ask yourself whether it is needed. As said, the decomposition should be logical.

For instance, look at the phone number field; it is rare that you will operate on the ISD code of a phone number separately (unless your application demands it). So it would be a wise decision to leave it as it is, because splitting it can lead to more complications.

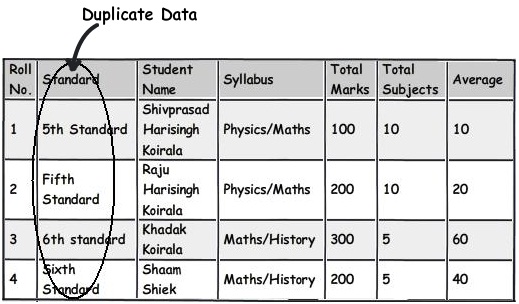

Rule 4:- Treat duplicate non-uniform data as your biggest enemy

Focus on duplicate data and refactor it. My personal worry about duplicate data is not that it takes up hard disk space, but the confusion it creates.

For instance, in the diagram below you can see that "5th Standard" and "Fifth standard" mean the same thing. You can say the data has come into your system due to bad data entry or poor validation. If you ever want to derive a report, it will show them as different entities, which is very confusing from the end user's point of view.

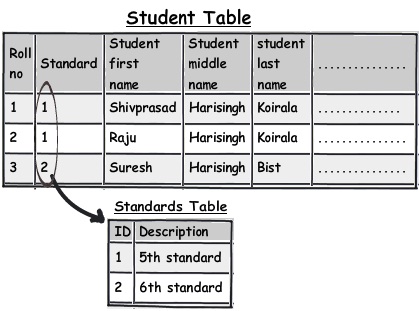

One solution would be to move the data into a separate master table and refer to it via foreign keys. You can see in the figure below how we have created a new master table called "Standards" and linked it using a simple foreign key.

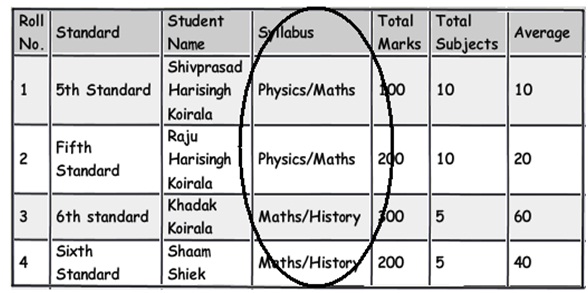
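A minimal sketch of the master-table idea follows. The "Standards" table comes from the article; the column names, the `student` table layout and the sample rows are assumptions for illustration. Note that SQLite enforces foreign keys only after `PRAGMA foreign_keys = ON`.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite checks foreign keys only when asked
conn.executescript("""
    -- Master table: each standard is spelled exactly once.
    CREATE TABLE standards (
        standard_id INTEGER PRIMARY KEY,
        name        TEXT NOT NULL UNIQUE
    );
    CREATE TABLE student (
        roll_no     INTEGER PRIMARY KEY,
        name        TEXT NOT NULL,
        standard_id INTEGER NOT NULL REFERENCES standards(standard_id)
    );
    INSERT INTO standards VALUES (1, '5th Standard'), (2, '6th Standard');
    INSERT INTO student VALUES (1, 'Shiv', 1), (2, 'Raju', 1), (3, 'Shaam', 2);
""")

# The report groups cleanly because there is only one spelling per standard.
report = conn.execute("""
    SELECT s.name, COUNT(*)
    FROM student AS st JOIN standards AS s ON s.standard_id = st.standard_id
    GROUP BY s.name ORDER BY s.name
""").fetchall()

# A standard that is not in the master table is rejected outright.
try:
    conn.execute("INSERT INTO student VALUES (4, 'Ajay', 99)")
    rejected = False
except sqlite3.IntegrityError:
    rejected = True

print(report, rejected)
```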

Rule 5:- Watch for data separated by separators.

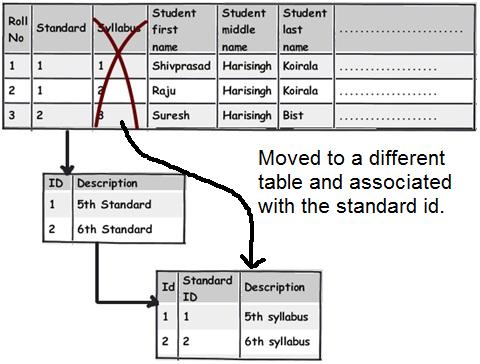

The second rule of first normal form says to avoid repeating groups. One example of a repeating group is shown in the diagram below. If you look at the syllabus field closely, too much data is stuffed into one field. These kinds of fields are termed "repeating groups". If we have to manipulate this data, the query will be complex, and I also doubt it will perform well.

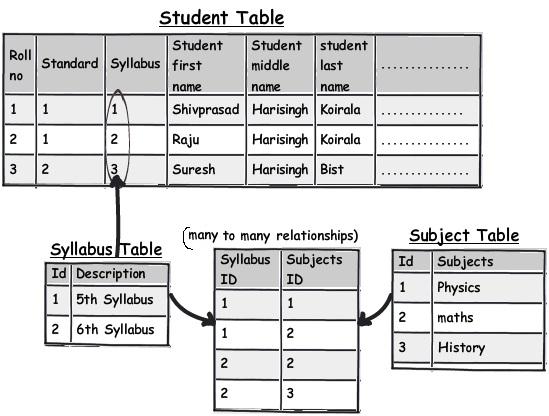

These kinds of columns, which have data stuffed in with separators, need special attention; a better approach is to move the field to a different table and link it with keys for better management.

So now let's apply the second rule of first normal form, "Avoid repeating groups". You can see in the above figure that I have created a separate syllabus table and then made a many-to-many relationship with the subject table.

With this approach the syllabus field in the main table no longer repeats or contains data separators.
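One way to sketch such a many-to-many relationship is with a junction table. The junction table name (`syllabus_subject`), the column names and the sample data are my own assumptions; the figure may lay things out differently.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE syllabus (syllabus_id INTEGER PRIMARY KEY, name TEXT NOT NULL);
    CREATE TABLE subject  (subject_id  INTEGER PRIMARY KEY, name TEXT NOT NULL);

    -- Junction table: one row per (syllabus, subject) pair
    -- replaces the separator-delimited list.
    CREATE TABLE syllabus_subject (
        syllabus_id INTEGER NOT NULL REFERENCES syllabus(syllabus_id),
        subject_id  INTEGER NOT NULL REFERENCES subject(subject_id),
        PRIMARY KEY (syllabus_id, subject_id)
    );

    INSERT INTO syllabus VALUES (1, 'Base syllabus'), (2, 'Advanced syllabus');
    INSERT INTO subject  VALUES (1, 'Maths'), (2, 'Science'), (3, 'History');
    INSERT INTO syllabus_subject VALUES (1, 1), (1, 3), (2, 1), (2, 2);
""")

# Which syllabuses include Maths? No string splitting is needed.
maths_syllabuses = [row[0] for row in conn.execute("""
    SELECT sy.name
    FROM syllabus AS sy
    JOIN syllabus_subject AS ss ON ss.syllabus_id = sy.syllabus_id
    JOIN subject AS su ON su.subject_id = ss.subject_id
    WHERE su.name = 'Maths'
    ORDER BY sy.name
""")]
print(maths_syllabuses)
```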

Rule 6:- Watch for partial dependencies.

Watch for fields that depend on only part of the primary key. For instance, in the above table the primary key is created on roll number and standard. Now watch the syllabus field closely: it is associated with the standard in which the student is studying, not directly with the student (roll number).

So if tomorrow we want to update the syllabus, we have to update it for each student, which is painstaking and not logical. It makes more sense to move these fields out and associate them with the standard table.

You can see how we have moved the syllabus field and attached it to the standards table.

This rule is nothing but second normal form: "All non-key columns should depend on the full primary key, not on part of it".
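Here is a small sketch of the result, assuming a composite key of roll number and standard as described above. The exact column names and sample rows are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Syllabus depends only on the standard, so it lives with the standard.
    CREATE TABLE standards (
        standard TEXT PRIMARY KEY,
        syllabus TEXT NOT NULL
    );
    -- The composite key stays here; the remaining column depends on the whole key.
    CREATE TABLE student (
        roll_no  INTEGER NOT NULL,
        standard TEXT NOT NULL REFERENCES standards(standard),
        name     TEXT NOT NULL,
        PRIMARY KEY (roll_no, standard)
    );
    INSERT INTO standards VALUES ('5th', 'Base syllabus'), ('6th', 'Advanced syllabus');
    INSERT INTO student VALUES (1, '5th', 'Shiv'), (2, '5th', 'Raju'), (1, '6th', 'Shaam');
""")

# Changing the syllabus of a standard now touches exactly one row,
# no matter how many students study in that standard.
cur = conn.execute(
    "UPDATE standards SET syllabus = 'Revised syllabus' WHERE standard = '5th'"
)
rows_updated = cur.rowcount

syllabus_by_student = conn.execute("""
    SELECT st.name, sd.syllabus
    FROM student AS st JOIN standards AS sd ON sd.standard = st.standard
    ORDER BY st.name
""").fetchall()
print(rows_updated, syllabus_by_student)
```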

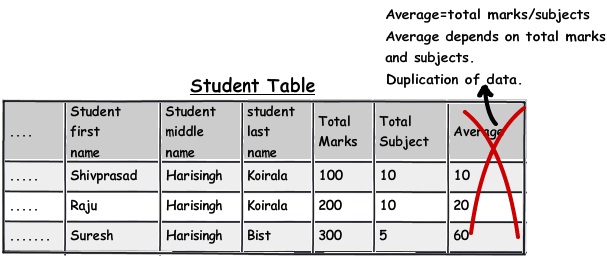

Rule 7:- Choose derived columns carefully

If you are working on OLTP applications, getting rid of derived columns is a good idea unless there is some pressing performance reason to keep them. In OLAP, where we do a lot of summations and calculations, these kinds of fields are necessary to gain performance.

In the above figure you can see how the average field depends on marks and subject. This is also a form of redundancy. So for fields that are derived from other fields, give some thought to whether they are really necessary.

This rule is also termed third normal form: "No column should depend on other non-primary-key columns". My personal thought is not to apply this rule blindly but to look at the situation; redundant data is not always bad. If the redundant data is calculated data, look at the situation and then decide whether you want to implement third normal form.
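The trade-off can be sketched as follows: a view derives the average on every read, while a stored copy is faster to read but can go stale. The `marks` table layout, the view and snapshot names, and the numbers are assumptions for this example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE marks (
        roll_no INTEGER NOT NULL,
        subject TEXT NOT NULL,
        marks   INTEGER NOT NULL,
        PRIMARY KEY (roll_no, subject)
    );
    INSERT INTO marks VALUES (1, 'Maths', 80), (1, 'Science', 90), (2, 'Maths', 60);

    -- OLTP flavour: derive the average on read, so it can never go stale.
    CREATE VIEW student_average AS
        SELECT roll_no, AVG(marks) AS average FROM marks GROUP BY roll_no;

    -- OLAP flavour: store a precomputed copy for fast reads;
    -- it has to be refreshed whenever the marks change.
    CREATE TABLE student_average_snapshot AS SELECT * FROM student_average;
""")

conn.execute("UPDATE marks SET marks = 100 WHERE roll_no = 1 AND subject = 'Maths'")

live = conn.execute(
    "SELECT average FROM student_average WHERE roll_no = 1"
).fetchone()[0]
stored = conn.execute(
    "SELECT average FROM student_average_snapshot WHERE roll_no = 1"
).fetchone()[0]
print(live, stored)
```

After the update the view reflects the new marks while the snapshot still holds the old average, which is exactly the redundancy risk a stored derived column brings.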

Rule 8:- Do not be hard on avoiding redundancy, if performance is the key

Do not make it a strict rule that you will always avoid redundancy. If there is a pressing need for performance, think about denormalization. With normalization you need joins across many tables; with denormalization the number of joins drops, which can improve read performance.

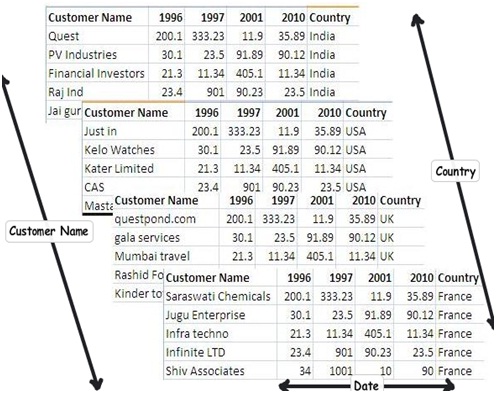

Rule 9:- Multidimensional data is a different beast altogether

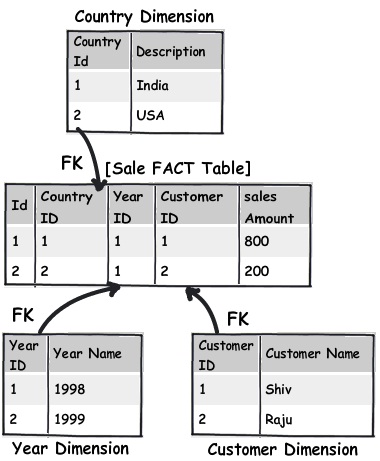

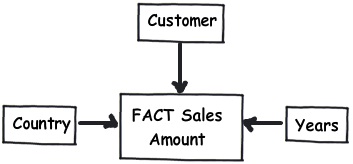

OLAP projects mostly deal with multidimensional data. For instance, in the figure below, you would like to get sales by country, customer and date. In simple words, you are looking at a sales figure that sits at the intersection of three dimensions.

For such situations a dimension-and-fact design is a better approach. In simple words, you create a central sales fact table that holds the sales amount field and connects to all the dimension tables through foreign key relationships.

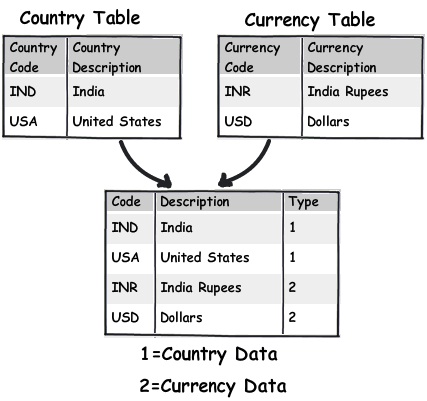
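A bare-bones sketch of such a fact and dimension layout is shown below. The `dim_` and `fact_` prefixes, the column names and the sample figures are conventions and data I have assumed for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE dim_country  (country_id  INTEGER PRIMARY KEY, name TEXT NOT NULL);
    CREATE TABLE dim_customer (customer_id INTEGER PRIMARY KEY, name TEXT NOT NULL);
    CREATE TABLE dim_date     (date_id     INTEGER PRIMARY KEY, day  TEXT NOT NULL);

    -- Central fact table: one measure plus a foreign key to every dimension.
    CREATE TABLE fact_sales (
        country_id   INTEGER NOT NULL REFERENCES dim_country(country_id),
        customer_id  INTEGER NOT NULL REFERENCES dim_customer(customer_id),
        date_id      INTEGER NOT NULL REFERENCES dim_date(date_id),
        sales_amount REAL NOT NULL
    );

    INSERT INTO dim_country  VALUES (1, 'India'), (2, 'Nepal');
    INSERT INTO dim_customer VALUES (1, 'Shiv'), (2, 'Raju');
    INSERT INTO dim_date     VALUES (1, '2024-01-01'), (2, '2024-01-02');
    INSERT INTO fact_sales VALUES (1, 1, 1, 100.0), (1, 2, 1, 50.0), (2, 1, 2, 70.0);
""")

# Slice the sales measure along one dimension; the other dimensions
# can be joined in the same way when they are needed.
sales_by_country = conn.execute("""
    SELECT c.name, SUM(f.sales_amount)
    FROM fact_sales AS f JOIN dim_country AS c ON c.country_id = f.country_id
    GROUP BY c.name ORDER BY c.name
""").fetchall()
print(sales_by_country)
```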

Rule 10:- Centralize name value table design

Many times I have come across name-value tables. A name-value table holds a key and some data associated with that key. For instance, in the figure below you can see a currency table and a country table. If you look at the data closely, each really has only a key and a value.

For such tables, creating one central table and differentiating the data by using a type field makes more sense.

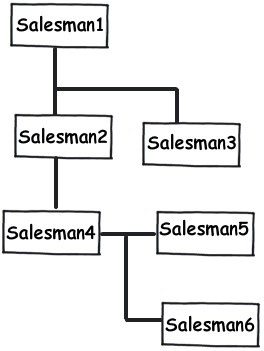
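As a sketch, a central table with a type field could look like this. The table name `lookup`, its columns, the helper `lookup_values` and the sample codes are all hypothetical names chosen for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- One central table replaces the separate currency and country tables.
    CREATE TABLE lookup (
        lookup_type TEXT NOT NULL,   -- for example 'currency' or 'country'
        code        TEXT NOT NULL,
        value       TEXT NOT NULL,
        PRIMARY KEY (lookup_type, code)
    );
    INSERT INTO lookup VALUES
        ('currency', 'INR', 'Indian Rupee'),
        ('currency', 'USD', 'US Dollar'),
        ('country',  'IN',  'India'),
        ('country',  'NP',  'Nepal');
""")

def lookup_values(kind):
    """Return {code: value} for one lookup type (hypothetical helper)."""
    rows = conn.execute(
        "SELECT code, value FROM lookup WHERE lookup_type = ? ORDER BY code",
        (kind,),
    )
    return dict(rows.fetchall())

currencies = lookup_values("currency")
countries = lookup_values("country")
print(currencies, countries)
```

Adding a new kind of name-value data then needs only new rows with a new type, not a new table.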

Rule 11:- For unlimited hierarchical data self-reference PK and FK

Many times we come across data with an unlimited parent-child hierarchy. For instance, consider a multi-level marketing scenario where one salesperson can have multiple salespeople below them. For such scenarios, a self-referencing primary key and foreign key will do the job.
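A minimal sketch of the self-referencing design follows. The table name `sales_person`, the `parent_id` column and the sample people are assumptions; the recursive query is one common way to walk such a tree and is not something the design itself requires.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE sales_person (
        person_id INTEGER PRIMARY KEY,
        name      TEXT NOT NULL,
        -- Foreign key back to the same table; NULL marks the top of the tree.
        parent_id INTEGER REFERENCES sales_person(person_id)
    );
    INSERT INTO sales_person VALUES
        (1, 'Shiv', NULL), (2, 'Raju', 1), (3, 'Shaam', 1), (4, 'Ajay', 2);
""")

# A recursive query walks the hierarchy to any depth below person 1.
downline = conn.execute("""
    WITH RECURSIVE team(person_id, name, depth) AS (
        SELECT person_id, name, 0 FROM sales_person WHERE person_id = ?
        UNION ALL
        SELECT sp.person_id, sp.name, team.depth + 1
        FROM sales_person AS sp JOIN team ON sp.parent_id = team.person_id
    )
    SELECT name, depth FROM team WHERE depth > 0 ORDER BY depth, name
""", (1,)).fetchall()
print(downline)
```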

This article is not meant to say that you should not follow the normal forms; rather, do not follow them blindly. Look at the nature of your project and the type of data you are dealing with.

With full respect to the normal forms, below is a video that explains the three normal forms step by step using a simple school table.